AI Supercomputers & Specialized Chips: Powering the Next Innovation Wave

1:Introduction: Why Hardware Defines the AI Era

Artificial intelligence has entered a phase where progress is no longer constrained by algorithms alone. Breakthroughs in deep learning, generative AI, and reinforcement learning continue, but the real bottleneck, and the real opportunity, lies in computing power. As AI models scale to hundreds of billions and even trillions of parameters, conventional hardware architectures are pushed to their limits.

In response, AI supercomputers and specialized chips have emerged as the foundation of the next innovation wave. These systems enable organizations to train massive models, deploy real-time inference at scale, and unlock new capabilities across science, industry, and consumer applications. By 2026, AI hardware has moved from background enabler to strategic differentiator.

This article explores how AI supercomputers and specialized chips are reshaping large-scale AI deployment, the architectures driving this transformation, key use cases, challenges, and what the future holds for AI-driven economies.

2:Understanding AI Supercomputers

a:What Is an AI Supercomputer?

An AI supercomputer is a high-performance computing (HPC) system purpose-built for artificial intelligence workloads. Traditional supercomputers are optimized for high-precision numerical simulation, typically in 64-bit floating point. AI supercomputers instead prioritize throughput on lower-precision matrix operations, massively parallel data processing, and rapid model training.

Core objectives of AI supercomputers include:

Training large language and multimodal models

Running inference at massive scale

Supporting data-intensive AI research

Enabling enterprise-grade AI applications

These systems are designed to handle workloads that would be impractical or impossible on conventional infrastructure.

b:Key Components of AI Supercomputers

AI supercomputers integrate several advanced components:

Accelerators: GPUs, TPUs, or custom AI ASICs

High-Bandwidth Memory (HBM): To supply accelerators with data fast enough to keep their compute units busy

High-Speed Interconnects: NVLink, InfiniBand, or proprietary fabrics

Distributed Storage: Supporting petabyte-scale datasets

Advanced Cooling Systems: Liquid and immersion cooling

Together, these components enable unprecedented computational throughput and scalability.

3:The Evolution of Specialized AI Chips

a:Why CPUs Alone Are Insufficient

Central Processing Units (CPUs) are designed for general-purpose computing, prioritizing flexibility over parallelism. Modern AI workloads, however, require executing millions of operations simultaneously.

Key CPU limitations include:

Limited parallel execution units

High energy consumption per AI operation

Memory bandwidth bottlenecks

These constraints drove the development of specialized AI accelerators.

b:Major Categories of AI Chips

Graphics Processing Units (GPUs)

GPUs remain the dominant platform for AI training. Their strengths include:

Massive parallel processing cores

Optimized tensor and matrix operations

Strong software ecosystem support

GPUs are widely used in hyperscale data centers and enterprise AI platforms.

c:Tensor Processing Units (TPUs)

TPUs are application-specific integrated circuits (ASICs) developed by Google specifically for machine learning workloads. They offer:

High efficiency for deep learning tasks

Tight integration with AI frameworks

Lower power consumption per computation

TPUs excel in large-scale training and inference environments.

d:AI Accelerators and NPUs

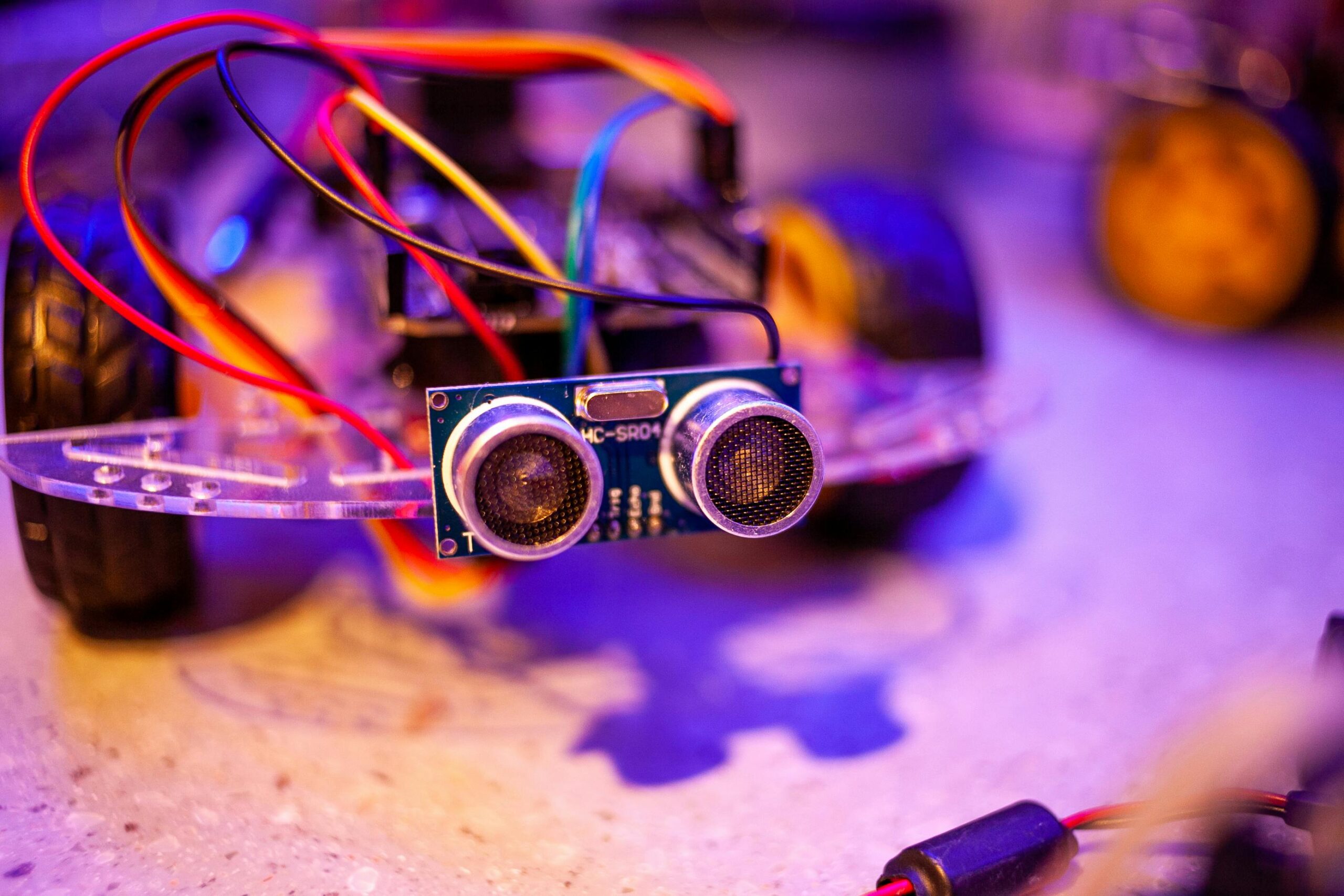

Custom accelerators and Neural Processing Units (NPUs) are optimized for specific AI tasks, particularly inference. They are commonly deployed in:

Smartphones and consumer electronics

Autonomous vehicles

Industrial and edge AI systems

These chips enable real-time AI processing with minimal latency.

4:Emerging Hardware Architectures Powering Large-Scale AI

a:Chiplet-Based Design

Large monolithic chips face manufacturing and scalability limits: yield falls as die area grows, and die size is capped by the lithography reticle. Chiplet-based architectures address this by combining several smaller dies into a single package.

Benefits include:

Improved manufacturing yields

Greater design flexibility

Faster innovation cycles

Chiplets are now central to next-generation AI processors.
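To make the yield benefit concrete, here is a minimal sketch using the simple Poisson yield model, in which the fraction of defect-free dies is exp(-area × defect density). The defect density and die areas below are hypothetical values chosen for illustration, not figures for any real process, and the model ignores packaging and die-to-die interconnect costs.

```python
import math

def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies expected to be defect-free under the Poisson yield model."""
    return math.exp(-area_cm2 * defects_per_cm2)

# Hypothetical process and design parameters (assumptions for illustration only).
DEFECT_DENSITY = 0.1      # defects per cm^2
MONOLITHIC_AREA = 8.0     # one large die, in cm^2
CHIPLET_COUNT = 4         # same total silicon split into four dies
chiplet_area = MONOLITHIC_AREA / CHIPLET_COUNT

monolithic_yield = poisson_yield(MONOLITHIC_AREA, DEFECT_DENSITY)
chiplet_yield = poisson_yield(chiplet_area, DEFECT_DENSITY)

print(f"monolithic die yield: {monolithic_yield:.1%}")
print(f"per-chiplet yield:    {chiplet_yield:.1%}")
```

Because each chiplet can be tested before packaging, a defect discards one small die rather than the whole large one, so the share of usable silicon tracks the per-chiplet yield.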

b:Heterogeneous Computing Architectures

Modern AI systems rely on heterogeneous computing, combining:

CPUs for control and orchestration

GPUs or accelerators for computation

Specialized networking and memory controllers

This approach maximizes performance and resource efficiency.

c:Memory-Centric Computing

As AI models grow, memory bandwidth becomes a critical bottleneck. New architectures aim to reduce data movement through:

High-bandwidth memory stacks

Near-memory and in-memory computing

Unified memory architectures

These innovations significantly improve performance and energy efficiency.
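One common way to reason about this bottleneck is the roofline model: attainable throughput is the smaller of peak compute and memory bandwidth multiplied by the operation's arithmetic intensity (FLOPs per byte moved). The sketch below applies it to a hypothetical accelerator; the peak and bandwidth figures are illustrative assumptions, and the matrix-multiply estimate assumes ideal data reuse.

```python
def attainable_flops(peak_flops, mem_bandwidth_bytes, flops_per_byte):
    """Roofline model: throughput is capped by compute or by memory traffic."""
    return min(peak_flops, mem_bandwidth_bytes * flops_per_byte)

# Hypothetical accelerator (illustrative numbers, not a real product).
PEAK_FLOPS = 100e12       # 100 TFLOP/s of compute
MEM_BANDWIDTH = 2e12      # 2 TB/s of memory bandwidth
BYTES_PER_VALUE = 2       # 16-bit values

# Elementwise add: 1 FLOP per element, reading two values and writing one.
add_intensity = 1 / (3 * BYTES_PER_VALUE)

# Square matrix multiply of size n: 2*n^3 FLOPs over three n x n matrices,
# assuming each matrix crosses the memory bus exactly once (ideal reuse).
n = 4096
matmul_intensity = (2 * n**3) / (3 * n**2 * BYTES_PER_VALUE)

add_rate = attainable_flops(PEAK_FLOPS, MEM_BANDWIDTH, add_intensity)
matmul_rate = attainable_flops(PEAK_FLOPS, MEM_BANDWIDTH, matmul_intensity)

print(f"add:    {add_rate / 1e12:.2f} TFLOP/s (memory-bound)")
print(f"matmul: {matmul_rate / 1e12:.2f} TFLOP/s (compute-bound)")
```

Under these assumptions the elementwise add reaches well under 1% of peak compute, which is why moving less data, or moving it faster, matters as much as adding arithmetic units.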

5:AI Supercomputers in Real-World Deployment

a:Training Large Language Models

AI supercomputers enable the training of massive models by distributing workloads across thousands of accelerators. This allows:

Faster training cycles

Improved model convergence

Reduced time-to-market for AI solutions

Large-scale training that once took months can now be completed in weeks or days.
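The simplest form of this distribution is data parallelism: each accelerator computes gradients on its own shard of a batch, and the results are averaged across workers (an all-reduce) before the shared weights are updated. The toy sketch below simulates that on one machine with a one-parameter model; the function names and data are hypothetical, and real systems perform the averaging over the interconnect rather than in a Python list.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for the one-parameter model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker ends up with the average."""
    return sum(values) / len(values)

# Toy dataset generated from y = 2x (illustrative only).
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]
w = 0.0

NUM_WORKERS = 4
shard_size = len(xs) // NUM_WORKERS

# Each simulated worker sees only its own equally sized shard of the batch.
local_grads = [
    grad_mse(w, xs[i * shard_size:(i + 1) * shard_size],
             ys[i * shard_size:(i + 1) * shard_size])
    for i in range(NUM_WORKERS)
]

global_grad = all_reduce_mean(local_grads)   # combined across workers
full_grad = grad_mse(w, xs, ys)              # single-device reference

print(global_grad, full_grad)
```

With equally sized shards, the averaged gradient matches the single-device gradient, so each worker does a fraction of the arithmetic while the model update stays the same. The cost is the communication step, which is why interconnect bandwidth matters at scale.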

b:Scientific Research and Discovery

In scientific domains, AI supercomputers accelerate:

Drug discovery and protein structure prediction

Climate modeling and weather forecasting

Materials science and semiconductor research

AI-driven simulations reduce experimentation costs and unlock new discoveries.

c:Enterprise and Industrial Applications

Enterprises deploy AI supercomputers for:

Predictive maintenance

Supply chain optimization

Financial risk modeling

Fraud detection and cybersecurity

These systems transform raw data into actionable insights at scale.

6:Energy Efficiency and Sustainability

a:The Growing Energy Challenge

AI supercomputers consume significant power, raising concerns about:

Operational costs

Environmental impact

Data center capacity limits

Energy efficiency is now a core design requirement.
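A rough back-of-the-envelope estimate shows why. Facility energy can be approximated as IT power multiplied by run time and by Power Usage Effectiveness (PUE), the ratio of total facility energy to IT equipment energy. Every number below is a hypothetical assumption for illustration, and the sketch counts accelerators only, ignoring host CPUs, networking, and storage.

```python
def facility_energy_kwh(num_accelerators, watts_per_accelerator, hours, pue):
    """Estimate facility energy: IT power (kW) x run time (h) x PUE overhead."""
    it_power_kw = num_accelerators * watts_per_accelerator / 1000
    return it_power_kw * hours * pue

# Hypothetical training cluster and run (assumptions, not measured data).
NUM_ACCELERATORS = 1024
WATTS_PER_ACCELERATOR = 700
RUN_HOURS = 30 * 24        # a 30-day training run

baseline = facility_energy_kwh(NUM_ACCELERATORS, WATTS_PER_ACCELERATOR, RUN_HOURS, pue=1.5)
efficient = facility_energy_kwh(NUM_ACCELERATORS, WATTS_PER_ACCELERATOR, RUN_HOURS, pue=1.2)

print(f"PUE 1.5: {baseline:,.0f} kWh")
print(f"PUE 1.2: {efficient:,.0f} kWh")
print(f"saved:   {baseline - efficient:,.0f} kWh")
```

Even in this simplified model, cooling and power-delivery overhead changes the total by a large margin without touching the chips themselves.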

b:Innovations in Sustainable AI Hardware

Key sustainability initiatives include:

Energy-efficient chip architectures

Advanced liquid and immersion cooling

AI-driven power management

Integration of renewable energy sources

Sustainable AI infrastructure is becoming a competitive advantage.

7:Global Competition and the AI Chip Race

a:AI Hardware as a Strategic Asset

Governments and corporations view AI hardware as critical infrastructure. Investments focus on:

Domestic semiconductor manufacturing

Secure supply chains

National AI capabilities

b:Supply Chain Resilience

The industry is diversifying production and sourcing to reduce geopolitical and operational risks, reshaping the global semiconductor landscape.

8:Edge AI and Distributed Intelligence

a:The Rise of Edge AI Hardware

Not all AI workloads run in centralized data centers. Edge AI hardware enables:

Real-time decision-making

Reduced latency

Enhanced data privacy

Applications include autonomous systems, smart factories, and healthcare devices.

b:Hybrid Cloud-Edge AI Models

Future AI deployments will increasingly blend:

Cloud-based training

Edge-based inference

This hybrid approach balances performance, cost, and responsiveness.

9:Software–Hardware Co-Design

a:Why Co-Design Matters

Optimizing AI performance requires aligning software with hardware capabilities. Benefits include:

Improved efficiency

Faster development cycles

Reduced deployment complexity

b:AI Compilers and Frameworks

Modern compilers automatically optimize models for target hardware, enabling developers to focus on innovation rather than low-level tuning.
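Operator fusion is one optimization such compilers commonly apply: consecutive elementwise operations are merged into a single kernel so the data is read and written once instead of once per operation. The toy sketch below imitates the idea in plain Python; the function names are hypothetical, and a real compiler works on a computation graph and emits hardware-specific code rather than composing Python functions.

```python
def scale(x):
    return x * 2.0

def shift(x):
    return x + 1.0

def relu(x):
    return max(x, 0.0)

def run_unfused(ops, data):
    """Apply each op in its own pass, materializing an intermediate list each time."""
    passes = 0
    for op in ops:
        data = [op(v) for v in data]
        passes += 1
    return data, passes

def fuse(ops):
    """Compose elementwise ops into one function applied per element."""
    def fused(v):
        for op in ops:
            v = op(v)
        return v
    return fused

def run_fused(ops, data):
    """Traverse the data once, applying the fused kernel to each element."""
    kernel = fuse(ops)
    return [kernel(v) for v in data], 1

ops = [scale, shift, relu]
data = [-3.0, -0.5, 0.0, 1.5]

unfused_result, unfused_passes = run_unfused(ops, data)
fused_result, fused_passes = run_fused(ops, data)

print(unfused_result == fused_result, unfused_passes, fused_passes)
```

Both versions produce the same values, but the fused one makes a single pass over the data, which on real hardware translates into fewer trips to memory.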

10:Future Directions Beyond 2026

a:Neuromorphic and Quantum Computing

Emerging paradigms such as neuromorphic chips and quantum accelerators aim to:

Mimic brain-like efficiency

Solve problems beyond classical computing

While still experimental, they could prove disruptive in the long term.

b:From Scale to Efficiency

The next wave of AI hardware will prioritize:

Smarter, more efficient models

Adaptive and context-aware hardware

Sustainable computing at scale

11:Conclusion: Hardware as the Engine of AI Innovation

AI supercomputers and specialized chips form the backbone of modern artificial intelligence. As AI applications expand across industries, hardware innovation determines what is possible, scalable, and sustainable.

By enabling faster training, real-time inference, and energy-efficient deployment, emerging hardware architectures are powering the next innovation wave. Organizations that invest strategically in AI infrastructure today will shape the future of technology, industry, and society.
